Digital Minds Matter Too

Against sneering at longtermists caring about digital minds


One of the more extreme critics of EA and longtermism, Émile Torres, has written a confused article criticizing longtermism. The article contains numerous errors that I might respond to at some point, but I think its definition of longtermism is particularly telling.

But what is longtermism? I have tried to answer that in other articles, and will continue to do so in future ones. A brief description here will have to suffice: Longtermism is a quasi-religious worldview, influenced by transhumanism and utilitarian ethics, which asserts that there could be so many digital people living in vast computer simulations millions or billions of years in the future that one of our most important moral obligations today is to take actions that ensure as many of these digital people come into existence as possible.

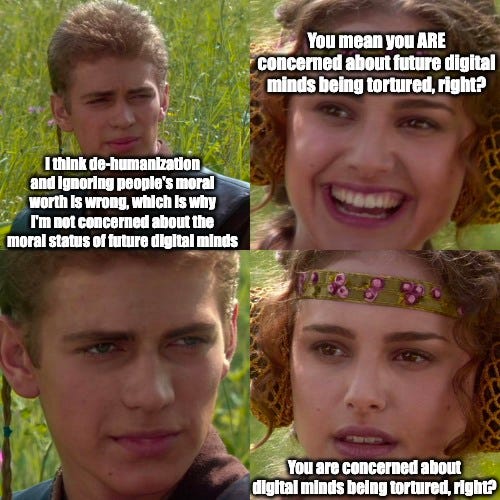

There’s an important error that should be addressed right off the bat. While many longtermists are motivated by considerations about vast numbers of flourishing digital people, this is not what longtermism is. Consider the following analogy: lots of Democrats are motivated by religion, and their desire to help people and eliminate poverty is caused, in large part, by their faith. Though this is true, criticizing religion would not be a criticism of the Democratic Party.

Even if many people in some movement are motivated by something, that would not mean that criticizing the motivation argues against the movement. If to be a longtermist one has to accept only that the future matters a lot, but many of them accept that the future matters a lot and that digital minds matter a lot, criticizing the second notion wouldn’t criticize longtermism.

It’s perfectly reasonable to say “I think X extreme claim, but even if you only think Y moderate claim, you should do Z.” Torres has made a career out of criticizing X extreme claim — generally only by sneering without giving an argument — rather than criticizing the more moderate claim that’s needed to justify longtermism.

But it’s worth turning to the claim about digital minds. Relevantly, many critics of EA make a big deal out of the idea that we have potential obligations to digital minds. Timnit Gebru — another person extremely (and I think irrationally) critical of EA — has lamented EAs caring about digital minds.

I’ve previously argued that we have reason to care about the well-being of future people. Contrary to the popular slogan, it’s actually good to make happy people, not just to make people happy (to find the full five-part series, enter the same URL but replace the final 1 with the number of the part you want).

For this to be a distinct criticism, it can’t just be that EAs care about future people — there must be some relevance to the fact that the minds are digital. I don’t think that there’s any reason to care less about digital minds than there is about human minds.

There are two possible objections to caring about digital minds. One would claim that digital minds don’t matter — the other would claim that there can’t be digital minds. Let’s address each of these.

As for the second claim, that there can’t be digital minds, I’ve addressed it in a post on the EA Forum. I’ll quote it.

This all relies on the possibility of digital sentience. I have about 92% confidence in the possibility of digital sentience, for the following reasons.

1 The reason described in this article, "Imagine that you develop a brain disease like Alzheimer’s, but that a cutting-edge treatment has been developed. Doctors replace the damaged neurons in your brain with computer chips that are functionally identical to healthy neurons. After your first treatment that replaces just a few thousand neurons, you feel no different. As your condition deteriorates, the treatments proceed and, eventually, the final biological neuron in your brain is replaced. Still, you feel, think, and act exactly as you did before. It seems that you are as sentient as you were before. Your friends and family would probably still care about you, even though your brain is now entirely artificial.[1]

This thought experiment suggests that artificial sentience (AS) is possible[2] and that artificial entities, at least those as sophisticated as humans, could warrant moral consideration. Many scholars seem to agree.[3]"

2 Given that humans are conscious, unless one thinks that consciousness relates to arbitrary biological facts relating to the fleshy stuff in the brain, it should be possible at least in theory to make computers that are conscious. It would be parochial to assume that the possibility of being sentient merely relates to the specific biological lineage that led to our emergence, rather than more fundamental computational features of consciousness.

3 Consider the following argument, roughly given by Eliezer Yudkowsky in this debate.

P1 Consciousness exerts a causally efficacious influence on information processing.

P2 If consciousness exerts a causally efficacious influence on information processing, copying human information processing would generate digital consciousness.

P3 It is possible to copy human information processing through digital neurons.

Therefore, it is possible to generate digital consciousness. All of the premises seem true.

P1 is supported here.

P2 is trivial.

P3 just states that there are digital neurons, which there are.

To the extent that we think that there are extreme tail ends to both experiences and numbers of people, this gives us good reason to expect the tail-end scenario for the long term to dominate other considerations.

The post also argues that there could be vast numbers of digital minds and that they could experience unfathomably more well-being and suffering than current humans. Even if you think there’s only a low chance that digital minds are possible, their sheer number and the scale of their welfare are so immense that, in expectation, they will trump other considerations.
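The expected-value reasoning in the paragraph above can be sketched symbolically. This is only an illustration under stated assumptions, not a calculation from the original post: $p$ (the probability that digital minds are possible), $N$ (how many of them there would be), $w$ (the average welfare at stake per mind), and $V$ (the total value of the competing, non-digital considerations) are all symbols introduced here.

```latex
% Illustrative sketch only; p, N, w, and V are hypothetical symbols, not from the post.
\[
  \mathbb{E}[\text{value from digital minds}] = p \cdot N \cdot w
\]
\[
  p \cdot N \cdot w > V
  \quad\Longleftrightarrow\quad
  p > \frac{V}{N \cdot w}
  \qquad (N > 0,\ w > 0)
\]
```

On this sketch, the larger $N \cdot w$ is, the smaller $p$ needs to be for digital minds to dominate the comparison. It assumes that value adds up across minds and that expected value is the right decision rule; someone who rejects either assumption can resist the conclusion.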

But now let’s turn to the ethical question: do digital minds matter? It’s odd that Torres claims that “Longtermism is a quasi-religious worldview,” given that Torres’ ignoring digital minds is itself quasi-religious in nature, seemingly relying on the idea that there’s something, perhaps a soul, that makes humans special. If we’re going to throw around accusations of quasi-religiosity, then the person defending the view that humans have some special, mystical worth over and above considerations of our welfare is going to lose the quasi-religiosity contest.

Aside from the fact that caring about digital minds sounds a bit strange, it’s hard to find a principled reason not to care about them. Here’s one way of teasing out the intuition. Suppose that you had an X-ray taken and it turned out that your brain is not made of flesh; instead, it’s made of wires. You’re a digital mind! Would your interests stop mattering? Of course not. It’s totally irrelevant whether one is digital or biological.

Thus, it can’t just be the fact of being digital that makes a being not matter. So what is it? It’s unclear, and the critics of EA never spell it out. It seems that those who criticize caring about digital minds rely on speciesism, or more accurately biological chauvinism, to justify ignoring digital minds. Yet there doesn’t seem to be any principled way to justify ignoring beings just because they’re digital.

This psychopathic disregard for digital minds has to stop. If digital minds potentially contain most future value, not caring about their interests is unimaginably dangerous. So, to paraphrase Dr. Seuss: “A person’s a person, no matter how digital.”

Stylistically, I think your writing would be more persuasive if you used fewer insulting adjectives (e.g. "unhinged critic", "psychopathic disregard"). Even if true, it gives vibes of being unduly biased in favor of EA.

Digital minds just disturb me. Even if we had the technology, I would not upload/digitize my mind unless certain death was the alternative.

Hopefully we get cool cyborg brains that are basically as durable.