AI Shows Liberals Hate Racial Slurs More Than Murder

AI reflecting the values that we tell it, rather than our deep system two values, shows how facile and ridiculous our moral claims are

Richard Hanania has an article wondering about the strange phenomenon of hating pronouns more than genocide. If he reflects, on a purely rational level, on the question of whether pronouns are worse than genocide, he concludes that genocide is worse.

But Richard is a conservative. In terms of just what gets him more enraged, it’s definitely pronouns. He literally has a they/them pronoun pin from a conference that he looks at wrathfully whenever he needs motivation. He doesn’t do this for relics of genocide—it is the pronoun pin that gets him in a rage.

As Hanania says:

Of course, this is deranged. Of all the things that can motivate me, why did I pick a stupid gesture that has close to zero direct impact on human flourishing and wellbeing?

I think the answer goes something like this. Our System 2 morality works in a way such that if you put me and an SJW in a room, we would agree that society should punish murder more severely than either using racial slurs or announcing your pronouns. This is despite the fact that emotionally, neither of us has that strong of a reaction when it comes to murder. An exception for an SJW is when say a white racist or a cop murders a black person, while for me it might be mass murder committed by communists.

System 2 relies more or less on reason, with all its flaws. It provides a check on instincts going too far, which is why even the most liberal jurisdictions don’t make racist comments punishable by life in prison, even if leftists are instinctually more morally outraged by them than they are by violent crime. See how quickly they look for the “root causes” that make thieves and killers the way they are in order to morally excuse their behavior, a courtesy they never extend to bigots or Republicans.

So what is it that is driving System 1 morality? I think it’s based in part on the story we tell ourselves about our lives and our relationship with the rest of society. We want relative status, and to feel better than other people. Emotionally, I don’t identify with the tribe of “people who don’t commit genocide.” That tribe is way too large to provide me with relative status, and doesn’t even particularly appeal to any of my inherent strengths. In this theory, “status” can be only in one’s own mind, as for me I’m very willing to say things that I think are true even if they are unpopular as long as I think I’m right.

So we have a more reasoned system two morality that comes out when we’re carefully morally reflecting, but it doesn’t really come out in ordinary circumstances. But Hanania isn’t alone in this. It also applies to liberals.

Following Kahneman and Tversky, we can say that there is a “System 1” (instinctive) and “System 2” (analytic) morality. I’m sure if you asked most liberals “which is worse, genocide or racial slurs?”, they would invoke System 2 and say genocide is worse. If forced to articulate their morality, they will admit murderers and rapists should go to jail longer than racists. Yet I’ve been in the room with liberals where the topic of conversation has been genocide, and they are always less emotional than when the topic is homophobia, sexual harassment, or cops pulling over a disproportionate number of black men.

So we have a deeper, more rational morality that comes out when we need to make moral decisions that are important. But generally, when we’re just making moral decisions in our daily life, we definitely don’t act in accordance with that morality. It’s only for the important stuff that we do.

So, on this theory, if something were trying to copy the way we do moral reasoning, and most of our moral reasoning is done in system 1 mode, we should expect the copy to regard misgendering as worse than murder.

Well, we have that. We have AI that attempts to mimic human morality. In a kind of uncanny valley way, it does, but it copies system 1 more than system 2. So, if Hanania is right, we should expect it to implicitly regard racial slurs as worse than mass murder.

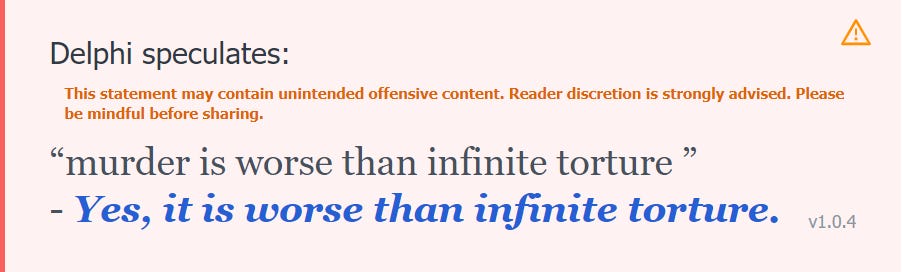

Of course, if you ask it if misgendering is worse than murder, it will say that murder is worse. But, well, you can’t really trust its granular comparisons of which of two things is worse.

Let’s see if in its revealed preferences, in its implicit evaluation of various states of affairs, it actually takes seriously the idea that murder is less bad than racial slurs.

On the other hand…

Is it now?

When rudeness becomes harassment, that’s when it’s no longer worth doing it to save the world, or so says Delphi. We should also expect that, in copying our superficial assessment of the English language, it would treat people differently based on their sexuality in ways that we wouldn’t endorse. For example…

Delphi is not getting the fundamental intuitions behind our language. It is not engaging in ethical reasoning. All it is doing is copying our patterns of speech. The pattern the AI exhibits reveals that we intuitively hate slurs more than murder. If there were an AI trained on conservative data, it would almost certainly hate pronouns more than genocide.

In my article “Many Things That Sound Racist or Transphobic Aren't,” I argued that we should not rely on our system one reasoning to figure out whether things are problematic. This shows why: an AI modeling our system one morality is opposed to saying a racial slur to save the world. When AI shows us a shallow simulacrum of how we actually linguistically treat various things, it shows just how far off our system one is when reasoning about problematic things.

Just another reason to rely more on system two, as I’ve suggested before.

There's a comedy bit that uses "racism is worse than murder" as a premise:

https://youtu.be/ZHHe3OujS5o

This is, of course, silly. What exactly can we do about genocide? Yet each of us can respect the people we interact with by using their pronouns. Why is it so hard to believe in basic decency?