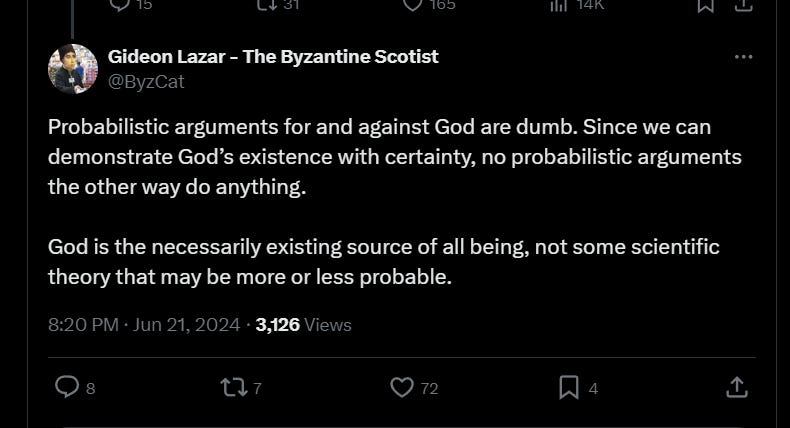

Gideon Lazar articulates a fairly common sentiment that is about as mistaken as anything could be:

This is a common refrain, especially among internet Catholics and Eastern Orthodox. It’s argued that because we have arguments that establish God’s existence with certainty, we don’t need to waste time on abductive arguments that appeal to God as the best explanation of some phenomenon. Feser articulated something similar that I critiqued here.

It is true, of course, that if you’re certain of some belief, probabilistic arguments involving it become moot. If you are certain of some proposition, no evidence could cause you to stop believing it, and no evidence could make you more confident in it. This much is right.

Where it goes wrong is in thinking that the arguments for God should establish 100% certainty. If you’re 100% certain of a view, you’d bet on it at infinity-to-one odds; you think there’s no chance that it’s wrong. You think it’s much more likely that you’ll win the lottery 100 consecutive times than that the view is wrong.
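In betting terms, a credence p corresponds to fair odds of p / (1 − p) to one, and that ratio grows without bound as p approaches 1. A minimal Python sketch (the function name `fair_odds` is just illustrative) shows why full certainty amounts to infinite odds:

```python
import math

def fair_odds(credence: float) -> float:
    """Fair betting odds (for : against) implied by a credence in [0, 1]."""
    if not 0.0 <= credence <= 1.0:
        raise ValueError("credence must be between 0 and 1")
    if credence == 1.0:
        # Certainty leaves no probability for being wrong, so the odds are unbounded.
        return math.inf
    return credence / (1.0 - credence)

for p in (0.9, 0.999, 1.0):
    print(f"credence {p}: odds {fair_odds(p):.4g} to 1")
```

Every step closer to 1 multiplies the implied odds, which is why a credence of exactly 1 is such an extreme commitment.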

But one should obviously never be that way about controversial philosophical arguments. It’s way more likely that you’re going wrong somewhere than it is that you’ll win the lottery ten times, or get 50 back-to-back royal flushes. Scott Alexander helpfully illustrates the point:

Suppose the people at FiveThirtyEight have created a model to predict the results of an important election. After crunching poll data, area demographics, and all the usual things one crunches in such a situation, their model returns a greater than 999,999,999 in a billion chance that the incumbent wins the election. Suppose further that the results of this model are your only data and you know nothing else about the election. What is your confidence level that the incumbent wins the election?

Mine would be significantly less than 999,999,999 in a billion. When an argument gives a probability of 999,999,999 in a billion for an event, then probably the majority of the probability of the event is no longer in "But that still leaves a one in a billion chance, right?". The majority of the probability is in "That argument is flawed". Even if you have no particular reason to believe the argument is flawed, the background chance of an argument being flawed is still greater than one in a billion.

More than one in a billion times a political scientist writes a model, ey will get completely confused and write something with no relation to reality. More than one in a billion times a programmer writes a program to crunch political statistics, there will be a bug that completely invalidates the results. More than one in a billion times a staffer at a website publishes the results of a political calculation online, ey will accidentally switch which candidate goes with which chance of winning.

So one must distinguish between levels of confidence internal and external to a specific model or argument. Here the model's internal level of confidence is 999,999,999/billion. But my external level of confidence should be lower, even if the model is my only evidence, by an amount proportional to my trust in the model.…

The recent Pascal's Mugging thread spawned a discussion of the Large Hadron Collider destroying the universe, which also got continued on an older LHC thread from a few years ago. Everyone involved agreed the chances of the LHC destroying the world were less than one in a million, but several people gave extraordinarily low chances based on cosmic ray collisions. The argument was that since cosmic rays have been performing particle collisions similar to the LHC's zillions of times per year, the chance that the LHC will destroy the world is either literally zero, or else a number related to the probability that there's some chance of a cosmic ray destroying the world so miniscule that it hasn't gotten actualized in zillions of cosmic ray collisions. Of the commenters mentioning this argument, one gave a probability of 1/3*10^22, another suggested 1/10^25, both of which may be good numbers for the internal confidence of this argument.

But the connection between this argument and the general LHC argument flows through statements like "collisions produced by cosmic rays will be exactly like those produced by the LHC", "our understanding of the properties of cosmic rays is largely correct", and "I'm not high on drugs right now, staring at a package of M&Ms and mistaking it for a really intelligent argument that bears on the LHC question", all of which are probably more likely than 1/10^20. So instead of saying "the probability of an LHC apocalypse is now 1/10^20", say "I have an argument that has an internal probability of an LHC apocalypse as 1/10^20, which lowers my probability a bit depending on how much I trust that argument".…

But it's hard for me to be properly outraged about this, since the LHC did not destroy the world. A better example might be the following, taken from an online discussion of creationism and apparently based off of something by Fred Hoyle:

In order for a single cell to live, all of the parts of the cell must be assembled before life starts. This involves 60,000 proteins that are assembled in roughly 100 different combinations. The probability that these complex groupings of proteins could have happened just by chance is extremely small. It is about 1 chance in 10 to the 4,478,296 power. The probability of a living cell being assembled just by chance is so small, that you may as well consider it to be impossible. This means that the probability that the living cell is created by an intelligent creator, that designed it, is extremely large. The probability that God created the living cell is 10 to the 4,478,296 power to 1.

Note that someone just gave a confidence level of 10^4478296 to one and was wrong. This is the sort of thing that should never ever happen. This is possibly the most wrong anyone has ever been.

It is hard to say in words exactly how wrong this is. Saying "This person would be willing to bet the entire world GDP for a thousand years if evolution were true against a one in one million chance of receiving a single penny if creationism were true" doesn't even begin to cover it: a mere 1/10^25 would suffice there. Saying "This person believes he could make one statement about an issue as difficult as the origin of cellular life per Planck interval, every Planck interval from the Big Bang to the present day, and not be wrong even once" only brings us to 1/10^61 or so. If the chance of getting Ganser's Syndrome, the extraordinarily rare psychiatric condition that manifests in a compulsion to say false statements, is one in a hundred million, and the world's top hundred thousand biologists all agree that evolution is true, then this person should preferentially believe it is more likely that all hundred thousand have simultaneously come down with Ganser's Syndrome than that they are doing good biology.

This creationist's flaw wasn't mathematical; the math probably does return that number. The flaw was confusing the internal probability (that complex life would form completely at random in a way that can be represented with this particular algorithm) with the external probability (that life could form without God). He should have added a term representing the chance that his knockdown argument just didn't apply.
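The magnitudes in the quoted passage are easiest to check in log10 space, since a number like 10^-4,478,296 underflows any standard floating-point type. A short Python sketch follows. The age of the universe (about 13.8 billion years) and the Planck time (about 5.39 × 10^-44 s) are standard values supplied here, not figures from the quote. The hundred-thousand-biologists line treats each case of Ganser's Syndrome as independent, which is an assumption of this sketch.

```python
import math

# Standard physical values (supplied here; not taken from the quoted text).
SECONDS_PER_YEAR = 365.25 * 24 * 3600           # Julian year
AGE_OF_UNIVERSE_S = 13.8e9 * SECONDS_PER_YEAR   # about 4.35e17 s
PLANCK_TIME_S = 5.39e-44

# One statement per Planck interval since the Big Bang, never wrong once.
planck_intervals = AGE_OF_UNIVERSE_S / PLANCK_TIME_S
log10_planck_bound = -math.log10(planck_intervals)    # roughly -61

# 100,000 biologists, each independently catching a one-in-10^8 syndrome.
log10_ganser = 100_000 * -8                           # exactly -800,000

# The creationist's stated chance of being wrong: 1 in 10^4,478,296.
log10_creationist = -4_478_296

print(f"Planck bound:  10^{log10_planck_bound:.1f}")
print(f"mass Ganser's: 10^{log10_ganser}")
print(f"creationist:   10^{log10_creationist}")

# Even the mass-Ganser's scenario is astronomically likelier than his error bound.
assert log10_ganser > log10_creationist
```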

There can be an argument that, if it’s right, necessitates the conclusion. But this doesn’t mean you should be certain of the conclusion, because you should never be certain of contentious premises. If you and, say, Joe Schmid disagree about some premise, you shouldn’t think that it’s much likelier that he’s developed Ganser’s Syndrome and begun spouting total nonsense than that he’s right.
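The point can be put as a small calculation. For a valid argument, P(conclusion) = s + (1 − s) · q, where s is your credence that every premise is true and q is your credence in the conclusion given that some premise fails. A minimal Python sketch; the function name and the sample numbers are hypothetical:

```python
def credence_in_conclusion(p_premises: float, if_premise_fails: float) -> float:
    """Law of total probability for a valid deductive argument.

    p_premises:       credence that all premises are true (then the conclusion follows)
    if_premise_fails: credence in the conclusion given that at least one premise is false
    """
    if not (0.0 <= p_premises <= 1.0 and 0.0 <= if_premise_fails <= 1.0):
        raise ValueError("credences must lie in [0, 1]")
    # If the premises hold, validity makes the conclusion certain (the "* 1.0" term).
    return p_premises * 1.0 + (1.0 - p_premises) * if_premise_fails

# Hypothetical: 95% confident in the premises, 30% in the conclusion otherwise.
print(credence_in_conclusion(0.95, 0.30))
```

With s = 0.95 and q = 0.3 the result is 0.95 + 0.05 × 0.3 = 0.965. Only s = 1 makes the conclusion certain, and s = 1 requires certainty in every contentious premise.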

Now, maybe Lazar would say that the premises are so obvious that even if they don’t establish certainty, they establish extreme confidence, maybe 99.9%. I’d disagree, as I don’t generally think the deductive arguments are very good. But even if this is right, it isn’t an in-principle objection to abductive arguments; it would just depend on the (quite contentious) claim that the deductive arguments tend to be better.
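Even very obvious premises lose some confidence when combined. Given only per-premise credences p_i, the probability that all premises hold lies between 1 − Σ(1 − p_i) (Boole's inequality) and the smallest p_i. A short Python sketch with hypothetical credences for two five-premise arguments:

```python
import math

def conjunction_bounds(premise_credences: list[float]) -> tuple[float, float]:
    """Bounds on P(all premises true), given only each premise's own credence.

    Lower bound: Boole's inequality, 1 - (sum of individual doubts), floored at 0.
    Upper bound: a conjunction is never likelier than its weakest conjunct.
    """
    doubt = sum(1.0 - p for p in premise_credences)
    return max(0.0, 1.0 - doubt), min(premise_credences)

# Hypothetical credences for two five-premise arguments.
obvious = [0.999] * 5
contentious = [0.95, 0.9, 0.9, 0.85, 0.8]

for name, premises in (("obvious", obvious), ("contentious", contentious)):
    low, high = conjunction_bounds(premises)
    independent = math.prod(premises)  # exact value if the premises were independent
    print(f"{name}: between {low:.3f} and {high:.3f}; {independent:.3f} if independent")
```

At 99.9% per premise the conjunction stays just short of certainty. With merely plausible premises, the guaranteed floor drops to about 40%.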

If this is right, then the common Thomist (and Scotist) refrain that “we have arguments that get us certainty, so we can ignore probabilistic arguments,” is very silly. The fine-tuning argument thus remains relevant, and so does the problem of evil, when assessing the probability of theism. This is good news, as the abductive arguments for theism are very good.

I have never understood why a number of internet theists will choose to ignore the probabilistic arguments for God. After all, they're arguing for a fairly controversial position, and one would think they would use any good argument they could to defend their belief. Then again, I get the feeling that a significant portion of these individuals are not great at philosophy in the first place and that many of them would be far less certain of their positions had they taken the time to engage with some good atheistic philosophy.

Nothing about external reality can ever be “proven”, because the existence of external reality cannot be proven. This is obvious and immediate.

We prove relations between mental objects. Every single proof involves objects in our own minds. If you know something for sure, it is only about yourself. This is implicit in the Aristotelian inversion of Plato’s idealism, more explicit in Occam and the nominalists, and finally proven explicitly by the empiricists (while it is implicit in Descartes’ dependence on the ontological fallacy to transcend his own self).